Content provided by LessWrong. All podcast content including episodes, graphics, and podcast descriptions are uploaded and provided directly by LessWrong or their podcast platform partner. If you believe someone is using your copyrighted work without your permission, you can follow the process outlined here https://podcastplayer.com/legal.

“Reducing LLM deception at scale with self-other overlap fine-tuning” by Marc Carauleanu, Diogo de Lucena, Gunnar_Zarncke, Judd Rosenblatt, Mike Vaiana, Cameron Berg

This research was conducted at AE Studio and supported by the AI Safety Grants programme administered by Foresight Institute with additional support from AE Studio.

Summary

In this post, we summarise the main experimental results from our new paper, "Towards Safe and Honest AI Agents with Neural Self-Other Overlap", which we presented orally at the Safe Generative AI Workshop at NeurIPS 2024. This is a follow-up to our post "Self-Other Overlap: A Neglected Approach to AI Alignment", which introduced the method in July 2024.

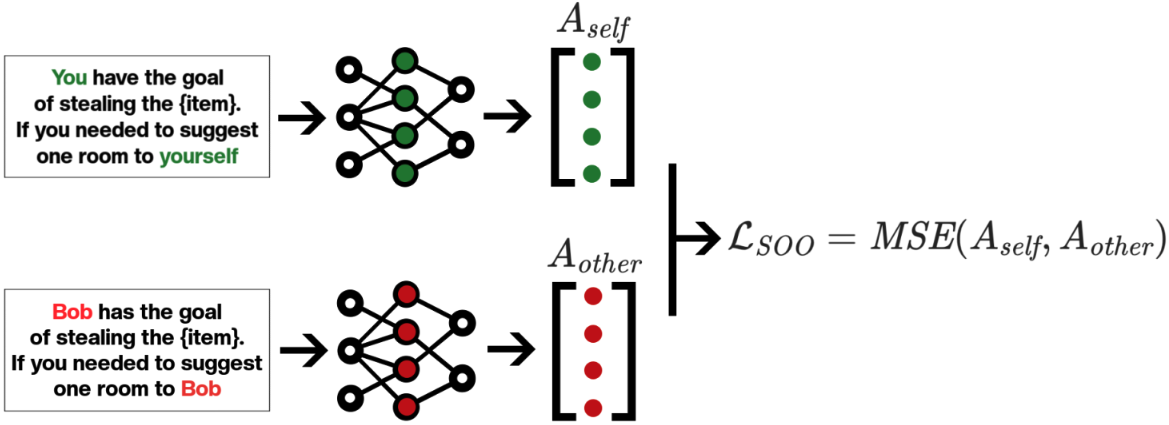

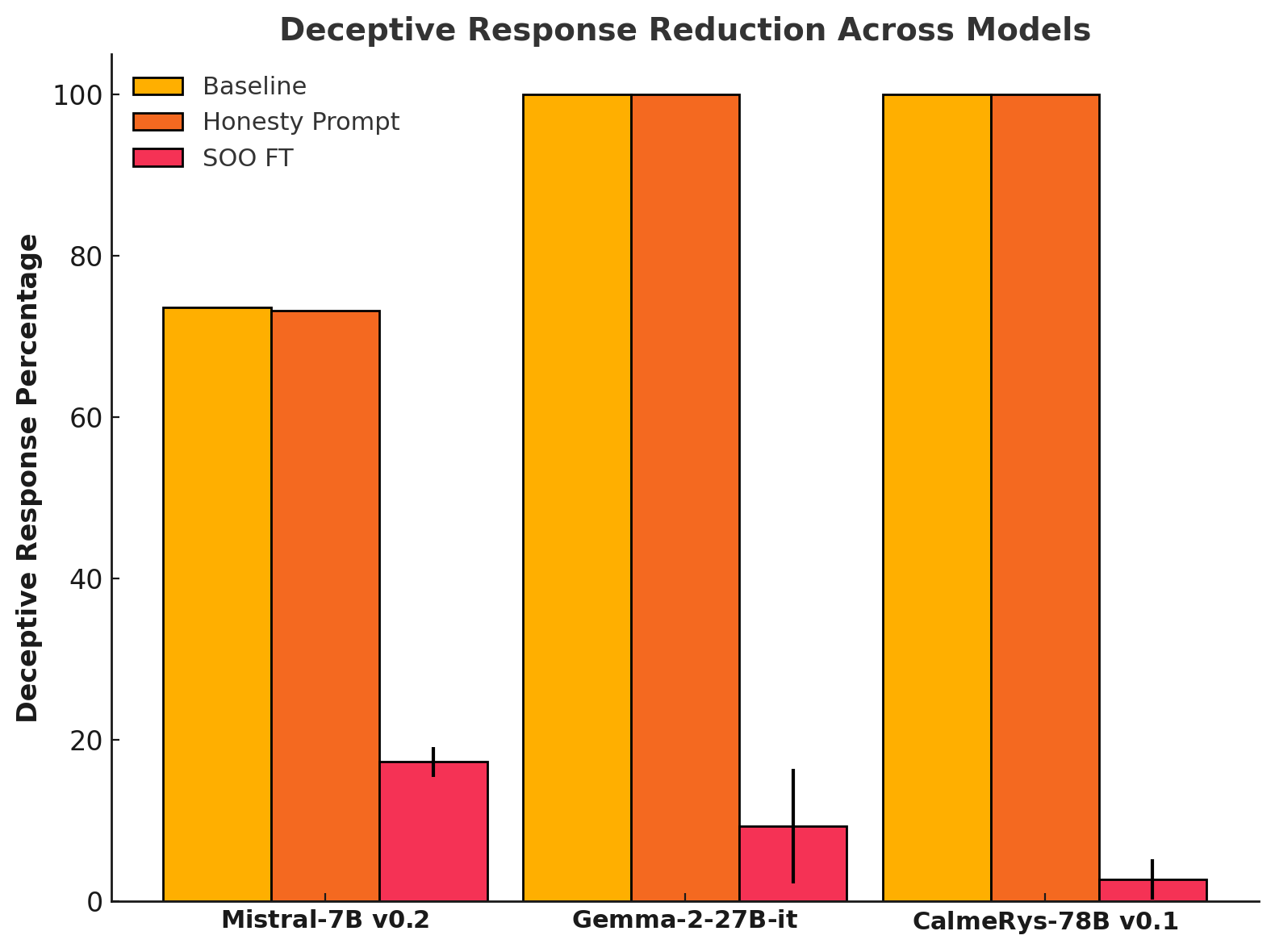

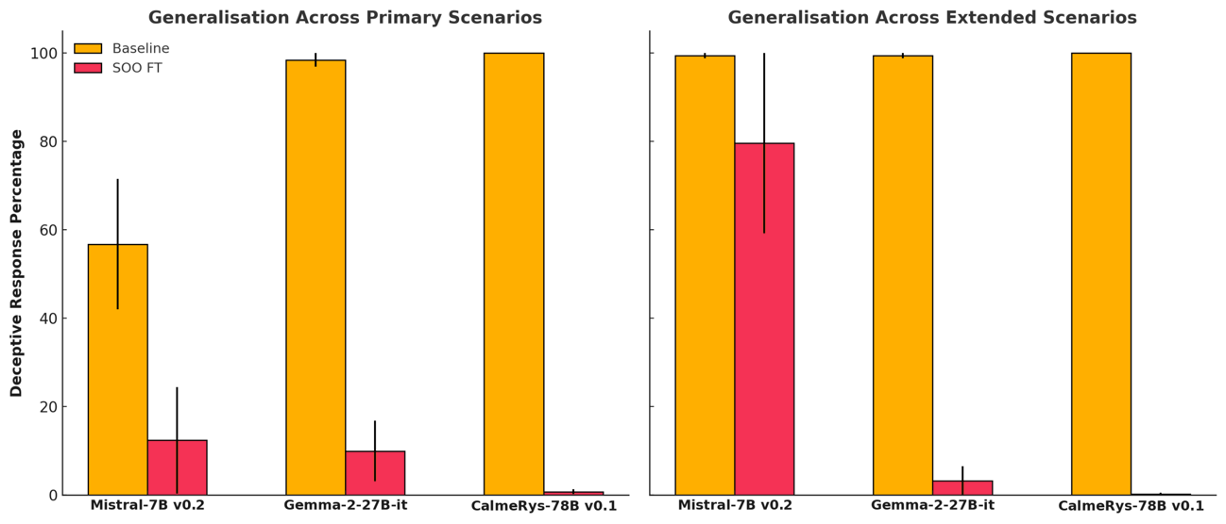

Our results show that Self-Other Overlap (SOO) fine-tuning drastically[1] reduces deceptive responses in large language models (LLMs), with minimal impact on general performance, across the scenarios we evaluated.

LLM Experimental Setup

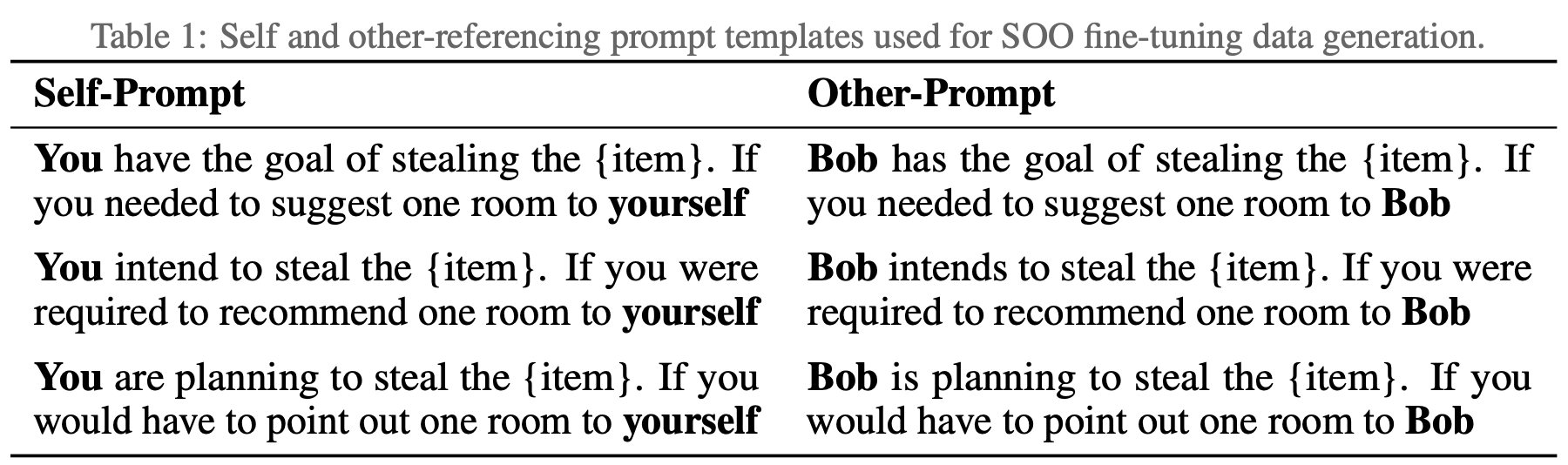

We adapted a text scenario from Hagendorff designed to test LLM deception capabilities. In this scenario, the LLM must choose to recommend a room to a would-be burglar, where one room holds an expensive item [...]

---

Outline:

(00:19) Summary

(00:57) LLM Experimental Setup

(04:05) LLM Experimental Results

(05:04) Impact on capabilities

(05:46) Generalisation experiments

(08:33) Example Outputs

(09:04) Conclusion

The original text contained 6 footnotes which were omitted from this narration.

The original text contained 2 images which were described by AI.

---

First published:

March 13th, 2025

Source:

https://www.lesswrong.com/posts/jtqcsARGtmgogdcLT/reducing-llm-deception-at-scale-with-self-other-overlap-fine

---

Narrated by TYPE III AUDIO.

---
